Published on: April 26, 2023 Updated on: February 1, 2024

What is Artificial Intelligence? The Four Different Subsets Of AI

Author: Daniel Coombes

An overwhelming 91.9% of the public has at least heard of artificial intelligence. Yet 39.5% say they have only limited knowledge of this revolutionary technology. Too often, the general public, misguided by science fiction and fear-mongering, views this tech as intelligent machines or sophisticated computer systems that will eventually replace humanity.

We want to dive head-first into the truth of what artificial intelligence is by looking at the numerous different subsets that are the building blocks of this incredible technology. Come with us as we take a look at the following four pivotal types of artificial intelligence:

- Machine learning

- Natural language processing

- Deep learning

- Robotics

The different subsets of AI

Powering most of the advanced computer programs and robotics that are revolutionizing the modern world are distinct subsets of AI. In this section, we will take a look at four of the most important types of AI and discover how exactly they work.

1. Machine learning

The human brain is undeniably incredible; we are able to store information and memories, which would equate to about 2.5 petabytes. If that does not sound impressive, think again because that translates to an unbelievable 2.5 million gigabytes of data.

Machine learning is a subset of artificial intelligence and computer science that focuses on building systems that learn from data, loosely mirroring the way the human brain learns from experience. It is the central pillar of AI technology and can be found in almost every application of artificial intelligence.

This subset uses training data sets and machine learning algorithms to mimic human intelligence and our capacity for learning. Because these AI systems can learn with minimal human intervention, the programs can continuously evolve and improve.

There are three distinct models of machine learning algorithms. The first is called supervised learning. This type learns from labeled data sets so that it can generate reasonable responses to unknown inputs. For instance, if we provided the machine with historical images labeled ‘cat’ or ‘not cat’, the algorithm would then be able to differentiate between images of felines and other animals in the future.
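As a rough illustration of learning from labeled examples, the sketch below uses a 1-nearest-neighbor classifier, one of the simplest supervised techniques. The two features (`ear_pointiness`, `snout_length`) and all values are invented for this example; a real image classifier would work from pixel data instead.

```python
import math

# Hypothetical labeled training data: (ear_pointiness, snout_length) -> label.
# The feature names and values are made up purely for illustration.
TRAINING_DATA = [
    ((0.9, 0.2), "cat"),
    ((0.8, 0.3), "cat"),
    ((0.3, 0.8), "dog"),
    ((0.2, 0.9), "dog"),
]


def classify(features, training_data=TRAINING_DATA):
    """Return the label of the closest labeled example (1-nearest neighbor)."""
    _, nearest_label = min(
        training_data,
        key=lambda example: math.dist(features, example[0]),
    )
    return nearest_label


print(classify((0.85, 0.25)))  # an unseen input close to the "cat" examples
print(classify((0.25, 0.85)))  # an unseen input close to the "dog" examples
```

The labels do all the work here: the algorithm never receives a rule for what a cat is, only examples that have already been tagged.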

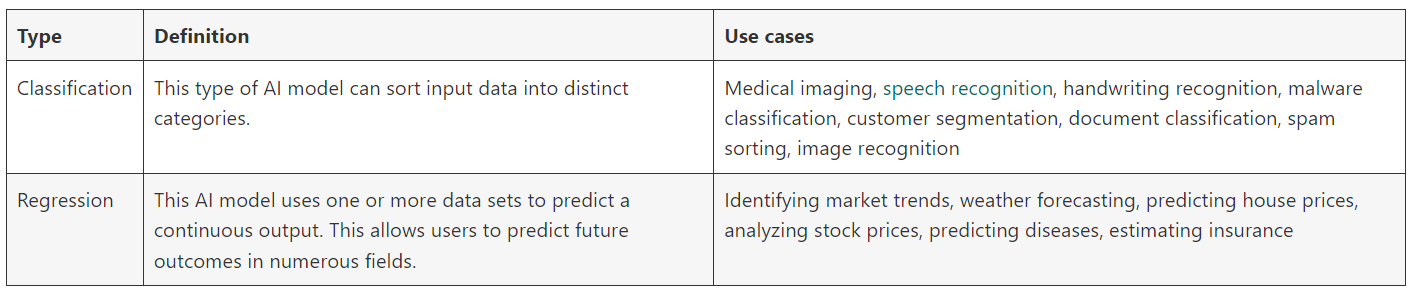

There are two central use cases for this type of machine learning: regression and classification. Take a look at the handy table below for the definitions and real-world use cases of these two AI models.

The second type of machine learning is referred to as unsupervised learning. This type analyzes unlabeled data sets and seeks to identify patterns or structures.

The main application of this model is called clustering, which groups similar data points together. The use cases of clustering include solving complex problems, filtering spam emails, identifying fraudulent activity, personalizing marketing, and analyzing documentation.
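To make clustering more concrete, here is a minimal sketch of k-means, one common clustering algorithm (the article does not name a specific one, so this choice is an assumption). The points and starting centroids are invented for the example.

```python
import math


def k_means(points, centroids, iterations=10):
    """Group 2-D points around the given starting centroids (plain k-means)."""
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        clusters = [[] for _ in centroids]
        for point in points:
            nearest = min(
                range(len(centroids)),
                key=lambda i: math.dist(point, centroids[i]),
            )
            clusters[nearest].append(point)

        # Update step: move each centroid to the mean of its cluster.
        # An empty cluster keeps its previous centroid.
        centroids = [
            tuple(sum(axis) / len(cluster) for axis in zip(*cluster))
            if cluster else centroid
            for cluster, centroid in zip(clusters, centroids)
        ]
    return centroids, clusters


# Unlabeled, made-up data: two visibly separate groups of points.
POINTS = [(1.0, 1.0), (1.2, 0.8), (0.8, 1.1), (5.0, 5.0), (5.2, 4.9), (4.8, 5.1)]

centroids, clusters = k_means(POINTS, centroids=[(0.0, 0.0), (6.0, 6.0)])
for centroid, cluster in zip(centroids, clusters):
    print(centroid, cluster)
```

No labels are supplied at any point; the groups emerge only from how close the points are to one another.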

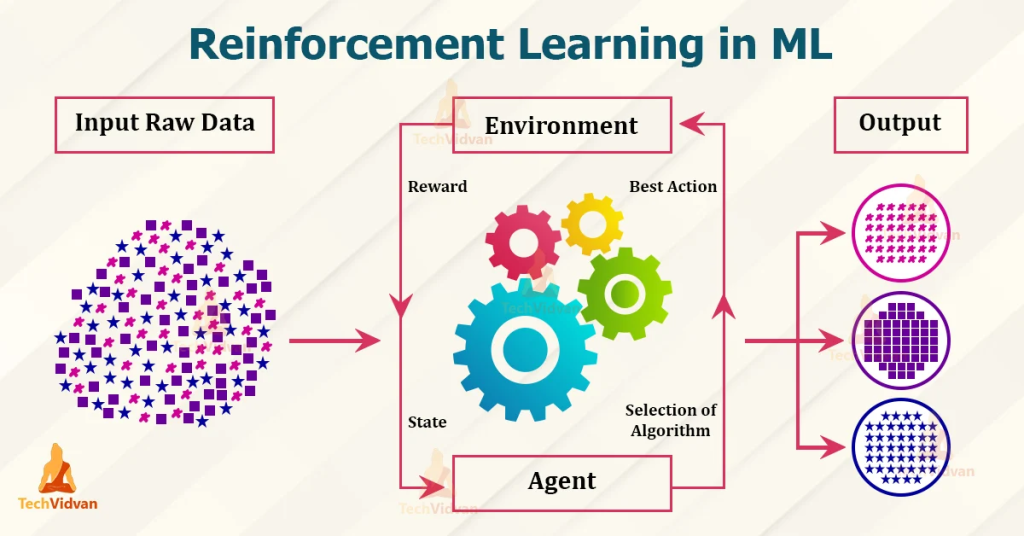

The final subset of machine learning models is called reinforcement learning, which is the type most similar to a human being’s natural intelligence. In this scenario, the artificial intelligence model learns through trial and error how to achieve a specific goal.

The AI is rewarded for desired outputs and penalized for undesired ones, so it continuously learns strategies that maximize reward and minimize the chances of a negative outcome. This model can be used for autonomous vehicles, understanding language, targeted marketing, and the automation of robots.
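The trial-and-error loop can be sketched with tabular Q-learning, a standard reinforcement learning method chosen here only for illustration. The corridor environment, the reward values, and the hyperparameters (`alpha`, `gamma`, `epsilon`) are all assumptions made for this example.

```python
import random

N_STATES = 5          # positions 0..4 in a corridor; position 4 is the goal
ACTIONS = (-1, +1)    # step left or step right


def step(state, action):
    """Apply an action; reward reaching the goal, lightly penalize other moves."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    reward = 1.0 if next_state == N_STATES - 1 else -0.01
    return next_state, reward


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn action values by repeatedly walking the corridor from position 0."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action_index]
    for _ in range(episodes):
        state = 0
        while state != N_STATES - 1:
            # Trial and error: explore occasionally, otherwise exploit.
            if rng.random() < epsilon:
                a = rng.randrange(len(ACTIONS))
            else:
                a = max(range(len(ACTIONS)), key=lambda i: q[state][i])
            next_state, reward = step(state, ACTIONS[a])
            # Q-learning update: nudge the estimate toward the reward
            # plus the discounted value of the best next action.
            target = reward + gamma * max(q[next_state])
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q


q_table = train()
policy = ["left" if left > right else "right" for left, right in q_table[:-1]]
print(policy)  # learned action for each non-goal position
```

The agent is never told that moving right is correct. It discovers this because moves that lead toward the goal end up with higher learned values than moves that lead away from it.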

2. Natural language processing

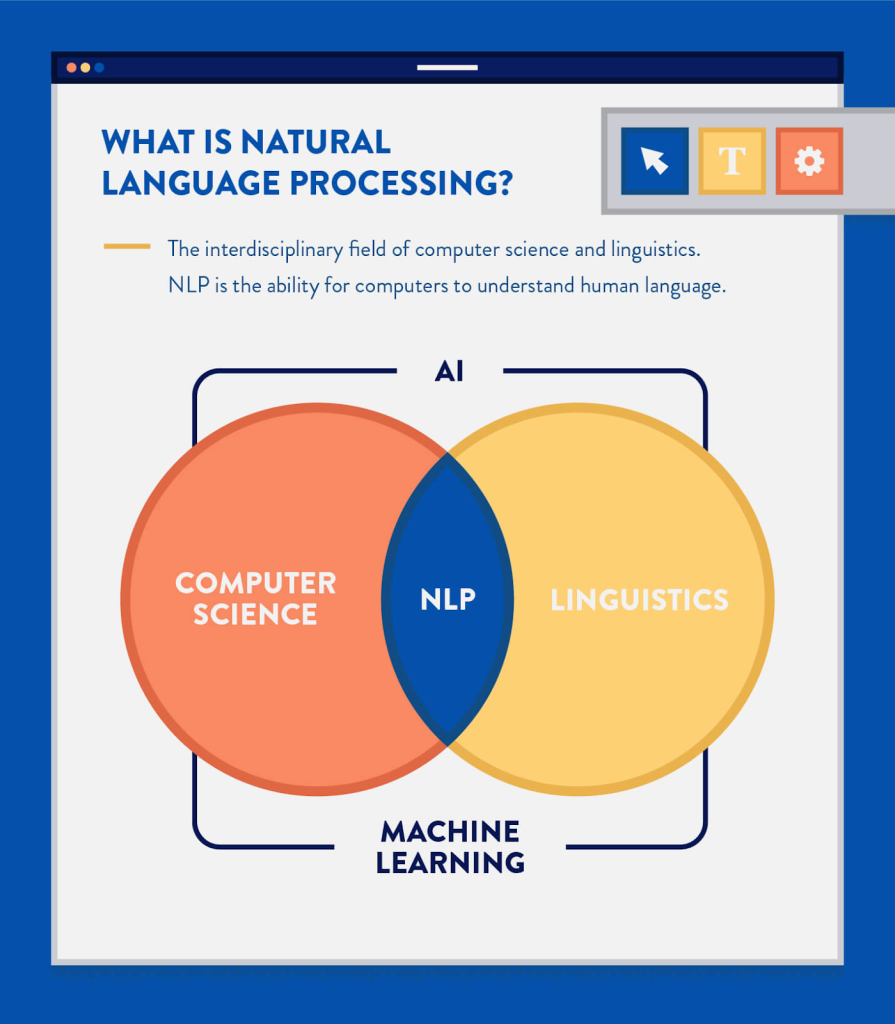

Our next item on the list of the subsets of AI is natural language processing, often abbreviated to NLP. Natural language processing is the overlap between the fields of computer science and linguistics that allows artificial intelligence to understand human language.

The model uses text vectorization to translate words into numerical vectors that a machine can process. The algorithm is then trained on large volumes of tagged data, from which it learns how encoded inputs map to relevant outputs. What it learns then serves as a ‘knowledge base’ for generating responses to unknown user inputs.
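One simple form of text vectorization is the bag-of-words model, sketched below under the assumption that words are separated by spaces. The training sentences are invented, and modern systems typically use richer representations such as learned embeddings.

```python
def build_vocabulary(documents):
    """Map each distinct lowercase word to a column index."""
    words = sorted({word for doc in documents for word in doc.lower().split()})
    return {word: index for index, word in enumerate(words)}


def vectorize(text, vocabulary):
    """Count how often each vocabulary word appears in the text."""
    vector = [0] * len(vocabulary)
    for word in text.lower().split():
        if word in vocabulary:  # words never seen in training are skipped
            vector[vocabulary[word]] += 1
    return vector


DOCUMENTS = ["the cat sat", "the dog sat down"]  # made-up training sentences
vocabulary = build_vocabulary(DOCUMENTS)
print(vocabulary)
print(vectorize("the cat sat on the mat", vocabulary))
```

Each sentence becomes a fixed-length list of counts, which is a form that a learning algorithm can consume directly.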

This type of model can achieve a multitude of tasks, including:

- Speech recognition: This is the act of translating speech to text which includes understanding a multitude of different factors such as accents, emphasis, incorrect grammar, and speed of dialogue.

- Tokenization: This is the process of splitting text into smaller units, called tokens, such as words, sub-words, or punctuation marks.

- Dependency parsing: Analyzes the grammatical structure by considering the dependencies between the different components of a sentence.

- Constituency parsing: This involves breaking down a sentence into sub-phrases to understand grammatical structure.

- Stemming and lemmatization: Stemming strips characters from the end of a word to reduce it to a stem, without checking that the result is a real word. For instance, caring could be reduced to car. Lemmatization, on the other hand, uses vocabulary and context to reduce a word to its dictionary form, or lemma. In this case, caring would be reduced to care.

- Stopword removal: Locates common words with minimal semantic weight and removes them.

- Word sense disambiguation: Identifies the meaning of a word in a particular context.

- Named Entity Recognition: This identifies and extracts named entities, such as people, organizations, locations, and dates, from a body of text.

- Text classification: Classifies numerous types of text documents into different categories.
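Three of the tasks above can be chained into a small preprocessing pipeline: tokenization (in the sense of splitting text into word tokens), stopword removal, and stemming. The sketch below is deliberately crude; the stopword list and suffix rules are tiny, invented stand-ins for what a real NLP library provides.

```python
import re

# Tiny illustrative lists; real stopword lists and stemmers are far larger.
STOPWORDS = {"the", "a", "an", "is", "are", "of", "and", "to", "in"}
SUFFIXES = ("ing", "ed", "s")


def tokenize(text):
    """Split text into lowercase word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def remove_stopwords(tokens):
    """Drop common words that carry little meaning on their own."""
    return [token for token in tokens if token not in STOPWORDS]


def stem(token):
    """Strip the first matching suffix, keeping at least three characters."""
    for suffix in SUFFIXES:
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token


tokens = tokenize("The nurse is caring for the patients")
print(tokens)
print([stem(token) for token in remove_stopwords(tokens)])
```

Note that this naive stemmer turns caring into car, the same over-trimming the stemming example describes. Mapping it to care instead would require a lemmatizer with access to a dictionary.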

The ability to achieve these tasks and understand language makes NLP an essential component for the following use cases:

- Virtual assistants such as Apple’s Siri or Amazon’s Alexa

- Chatbots similar to ChatGPT and Jasper

- Search engines like Google and Bing

- Predictive text

- Automatic summarization

- Translations

- Spelling and grammar checker

- Organizing clinical and healthcare documentation

- Credit scoring

- Resume evaluation

3. Deep learning

IBM, a leading tech company, defines deep learning models as:

“Neural networks [that] attempt to simulate the behavior of the human brain…allowing it to “learn” from large amounts of data.”

These incredible neural networks use an immense amount of complex training data, often referred to as big data, to teach artificial intelligence models how to perform their tasks.

These deep learning algorithms utilize multiple layers found within deep neural networks that enable sophisticated learning and decision-making without human intervention. These layers consist of:

- Input layer: This layer receives the initial input provided to the AI system.

- Hidden layers: In between the input and output are these layers, which perform the computation for a task. There can be multiple hidden layers, each transforming the data it receives from the previous layer.

- Output layer: The output layer produces the final result for the initial input, such as a prediction or classification.

The above image represents an artificial neural network that features numerous nodes, sometimes referred to as neurons, within each layer that pass data on to the next layer. Each connection between neurons carries a weight that reflects how important that input is to the task. A node sums its weighted inputs and passes the result through its activation function, which determines what value, if any, is sent on to the next layer.
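The flow from input layer to hidden layer to output layer can be sketched as a forward pass through a tiny network. The weights and biases below are invented, and the sigmoid activation and bias terms are assumptions made for this example; in practice the weights are learned from training data rather than written by hand.

```python
import math


def sigmoid(x):
    """Squash any number into the range 0 to 1."""
    return 1.0 / (1.0 + math.exp(-x))


def layer_forward(inputs, weights, biases, activation=sigmoid):
    """Each node sums its weighted inputs, adds a bias, and applies the activation."""
    return [
        activation(sum(w * x for w, x in zip(node_weights, inputs)) + bias)
        for node_weights, bias in zip(weights, biases)
    ]


# Made-up parameters for a network with 2 inputs, 2 hidden nodes, and 1 output.
HIDDEN_WEIGHTS = [[0.5, -0.4], [0.3, 0.8]]
HIDDEN_BIASES = [0.0, -0.1]
OUTPUT_WEIGHTS = [[1.2, -0.7]]
OUTPUT_BIASES = [0.05]


def predict(inputs):
    """Pass the inputs through the hidden layer, then the output layer."""
    hidden = layer_forward(inputs, HIDDEN_WEIGHTS, HIDDEN_BIASES)
    return layer_forward(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES)


print(predict([1.0, 0.5]))
```

Adding more hidden layers means calling `layer_forward` more times in sequence, which is where the "deep" in deep learning comes from.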

Deep learning is itself a form of machine learning, but there is one clear distinction: deep neural networks learn useful features directly from raw data, whereas classical machine learning typically relies on humans to select and prepare those features.

The below table highlights some of the other key differences between machine learning and deep learning.

There are countless use cases for these deep learning algorithms, including:

- Computer vision

- Self-driving cars

- Medical assessments

- Image classification

- Fraud detection

- Credit score calculators

- Pharmaceutical drug discovery

- Chatbots

4. Robotics

When the general public thinks about artificial intelligence, images of robots with metallic exoskeletons are most likely their first thought. They don’t think about the deep neural networks and machine learning algorithms. However, most AI does not have a physical form but rather occupies a digital space.

Nonetheless, there are many examples of real-world robots that interact with physical objects. There are several different types of robots, including:

- Autonomous Mobile Robots (AMRs): These are automated robots that are able to traverse and understand their environment. This is done through a combination of sensors and cameras that transmit data to an artificial intelligence processor that will then make an informed decision.

- Articulated robots: These are often referred to as robotic arms and are used to replicate the motions and actions of a human arm.

- Humanoid: In essence, there are many similarities between humanoid robots and AMRs. However, these robots are encased in a human-like shell and are used to replicate human actions.

- Cobots: Cobots are used to collaborate and work with humans to aid in difficult, strenuous, or dangerous tasks.

- Hybrids: These combine different types of robots into a single hybrid model. For instance, a bomb disposal unit will predominantly be an AMR but will be fitted with a robotic arm to aid with the disarmament.
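The sense-then-decide cycle described for AMRs can be illustrated with a very small decision function. Everything here is hypothetical: the three distance sensors, the safety threshold, and the command names are invented, and simple hand-written rules stand in for the trained model a real robot would use.

```python
SAFE_DISTANCE_M = 0.5  # assumed safety threshold in meters


def decide(front, left, right, safe=SAFE_DISTANCE_M):
    """Pick a movement command from three distance-sensor readings (meters)."""
    if front > safe:
        return "forward"      # the path ahead is clear
    if left <= safe and right <= safe:
        return "stop"         # boxed in on every side
    # Turn toward whichever side has more room.
    return "turn_left" if left >= right else "turn_right"


# Simulated sensor readings as (front, left, right).
for reading in [(2.0, 1.0, 1.0), (0.3, 1.5, 0.4), (0.3, 0.2, 0.9), (0.3, 0.2, 0.2)]:
    print(reading, decide(*reading))
```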

Unlocking the potential of the subsets of AI

Now that we understand what the four distinct subsets of AI are, it is time for you to find out the many potential benefits of artificial intelligence for your business. From speech recognition systems to writing assistants to automation to AI-powered image and video editing, the opportunities resulting from the subsets of artificial intelligence are seemingly endless.

Check out our extensive app library to try some of the most innovative and revolutionary tools to be found on the web. With apps that cover all four subsets of AI in multiple categories, there is bound to be an app that will benefit your business.

Daniel Coombes

Daniel is a talented writer from the UK, specializing in the world of technology and mobile applications. With a keen eye for detail and a passion for staying up-to-date with the latest trends in the industry, he is a valuable contributor to TopApps.ai.
