Published on: April 20, 2023

What Enables Image Processing and Speech Recognition in Artificial Intelligence?

Author: Inge von Aulock

Artificial intelligence (AI) has made remarkable strides in recent years, with impressive advancements in image processing and speech recognition. From facial recognition software to virtual assistants, these technologies have become a part of our daily lives.

But what exactly enables these feats of AI? What technologies are behind the ability of machines to recognize and interpret images and speech? In this article, we will explore what image processing and speech recognition are, how they work, and which artificial neural networks they use, before taking a look at some real-world use cases.

What is image processing and how does it work?

In short, image processing is the analysis of a digital image to extract information from it. When a computer does this automatically, it is often described as machine vision. By recognizing patterns in an image, we are able to interpret the image and change it in many ways:

- Image recognition: This refers to the ability to detect and recognize what an image is of.

- Object detection: This refers to detecting and identifying specific objects in an image.

- Image classification: This refers to assigning a label to an image. This means images can be easily organized.

- Increasing quality: A large part of image processing focuses on increasing the quality of an image, for example by sharpening it or removing noise. With AI-based methods, this happens once the features of the image have been identified.

What type of AI is used in image processing?

Machine learning, a branch of AI, is used for image processing. This type of AI technology uses algorithms that allow computers to analyze data and recognize patterns.

In modern image processing, ‘deep learning’ algorithms, a subset of machine learning, are used. These algorithms are built on deep neural networks. Once the networks are fed large amounts of training data, they learn for themselves how to recognize and tell apart the patterns in it, and they generally become more accurate as they see more examples.

For example, a computer will be fed thousands and thousands of labeled images of dogs as its training data, and it will teach itself to recognize dogs, even in images that contain more than one object.
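To make the idea of learning from labeled examples concrete, here is a minimal sketch using a perceptron, which is far simpler than the deep neural networks used in practice. Everything in it is an assumption for illustration: each "image" is reduced to two made-up feature numbers, and the names `train_perceptron` and `predict` are hypothetical.

```python
def predict(weights, bias, features):
    """Return 1 ("dog") if the weighted sum is positive, else 0."""
    score = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if score > 0 else 0


def train_perceptron(samples, labels, epochs=20, learning_rate=0.1):
    """Nudge the weights every time a training example is misclassified."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in zip(samples, labels):
            error = label - predict(weights, bias, features)
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias += learning_rate * error
    return weights, bias


# Made-up feature pairs standing in for images; 1 = dog, 0 = not a dog.
samples = [(0.9, 0.8), (0.8, 0.9), (0.7, 0.7),
           (0.1, 0.2), (0.2, 0.1), (0.3, 0.3)]
labels = [1, 1, 1, 0, 0, 0]

weights, bias = train_perceptron(samples, labels)
print(predict(weights, bias, (0.85, 0.75)))  # an unseen dog-like example
print(predict(weights, bias, (0.15, 0.25)))  # an unseen non-dog example
```

The point is the loop: the model makes a guess, compares it with the label, and adjusts itself. Deep learning follows the same principle with millions of adjustable weights.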

This is done with convolutional neural networks (CNNs). A CNN treats an image as a grid of pixel values and slides small filters across it. These mathematical operations, called convolutions, pick out features such as edges and textures, and the network uses them to predict what the image shows. During training, it checks its predictions against the correct labels and adjusts its filters, repeating the process until the predictions become more accurate.

Think of it like a computer squinting to make out something in the distance. As it moves closer (as the predictions become more accurate), the object becomes clearer.
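The core mathematical operation in a CNN can be sketched in a few lines. This is a simplified illustration, not a full network: the filter below is hand-written to respond to vertical edges, whereas a real CNN learns its filter values during training. The function name `convolve2d` and the tiny image are assumptions made for the example. As in most deep learning libraries, the filter is applied without flipping it.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image and sum the element-wise products."""
    kernel_h, kernel_w = len(kernel), len(kernel[0])
    out_h = len(image) - kernel_h + 1
    out_w = len(image[0]) - kernel_w + 1
    output = []
    for row in range(out_h):
        out_row = []
        for col in range(out_w):
            total = 0
            for i in range(kernel_h):
                for j in range(kernel_w):
                    total += image[row + i][col + j] * kernel[i][j]
            out_row.append(total)
        output.append(out_row)
    return output


# A tiny grayscale image: dark on the left, bright on the right.
image = [
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
]

# A filter that responds where brightness increases from left to right.
vertical_edge = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

feature_map = convolve2d(image, vertical_edge)
print(feature_map)  # [[0, 27, 27, 0], [0, 27, 27, 0]]
```

The output is zero over the flat regions and large where the dark area meets the bright one, which is how a filter "finds" an edge.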

Real-world use cases of image processing

So, how are these recognition systems used in day-to-day tasks? Well, you’ll be surprised at how often this technology is being used without you even noticing. Sure, they are becoming increasingly common in new tech like self-driving cars, but let’s take a look at a few examples already in practice.

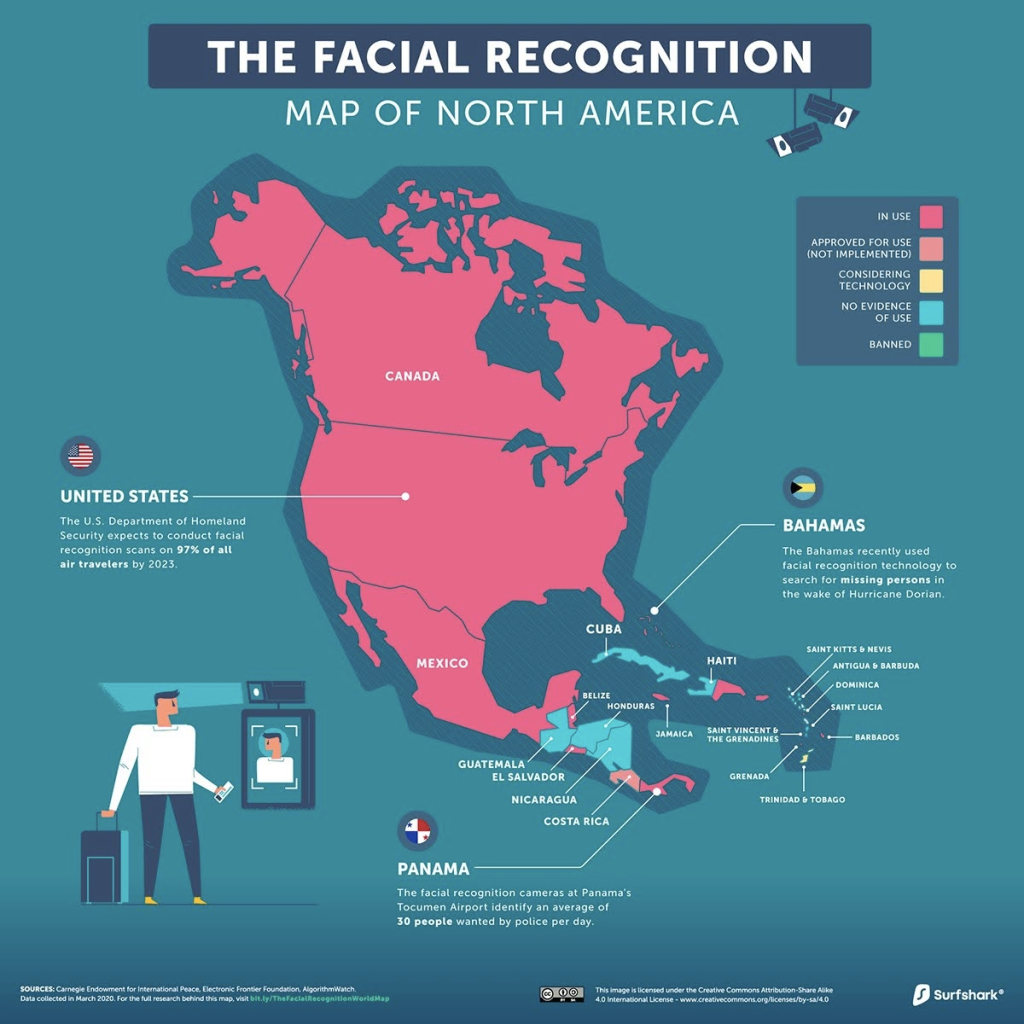

Facial recognition

By using AI, face recognition technology can identify people from their features in photos and videos. The algorithm described above works in the same way by breaking down a face into segments. It first identifies human eyes, then eyebrows, nose, and mouth, until it can confirm it is seeing a human face.

The features can then be compared to hundreds of thousands of faces from various databases until a match is found. This is called face matching. This is used in:
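The comparison step can be sketched as follows, assuming each face has already been turned into a short list of numbers describing its features. The database entries, the names, the threshold of 0.95, and the use of cosine similarity are all illustrative assumptions, not a description of any particular product.

```python
import math


def cosine_similarity(a, b):
    """Return a score near 1.0 when two feature lists point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def best_match(query, database, threshold=0.95):
    """Return the most similar name, or None if nothing clears the threshold."""
    best_name, best_score = None, threshold
    for name, features in database.items():
        score = cosine_similarity(query, features)
        if score >= best_score:
            best_name, best_score = name, score
    return best_name


# Hypothetical stored feature lists for two known people.
database = {
    "person_a": [0.9, 0.1, 0.3],
    "person_b": [0.2, 0.8, 0.5],
}

print(best_match([0.88, 0.12, 0.31], database))  # close to person_a
print(best_match([0.1, 0.1, 0.9], database))     # unlike anyone stored
```

The threshold matters in practice: set it too low and strangers are matched to the wrong person, set it too high and genuine matches are missed.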

- CCTV surveillance

- Biometric identification (like using your face to open your phone)

- Issuing identity documents like passports

- Border checks

- Computer vision, and more

Healthcare

This technology can also be used to analyze scans and streamline medical imaging. There are a number of benefits to using this technology in healthcare:

- Reducing misdiagnosis by providing a second check against human error

- Reducing the workload of doctors and nurses

- Improved accuracy and automation

When AI flags abnormalities, fewer issues should go undetected. Earlier in 2023, researchers at MIT developed an AI that can assess lung cancer risk from CT scans.

License plate identification

Ever been caught out by a speed camera? Of course not. You’re a responsible driver. But if you were, you’d be contacted via a letter to your home, addressed to you. All of this information is obtained just from your license plate.

Cameras with AI technology recognize the license plate and translate it into readable characters, a process known as optical character recognition (OCR). The result can then be run through databases to find a match with a vehicle and its owner.
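Once the characters have been read, the database lookup itself is simple. The sketch below assumes the recognition step has already produced a text string; the registry contents and the function names `normalize_plate` and `find_owner` are made up for illustration.

```python
def normalize_plate(raw_text):
    """Keep only letters and digits, in upper case."""
    return "".join(ch for ch in raw_text.upper() if ch.isalnum())


def find_owner(raw_text, registry):
    """Look up a recognized plate; return None if it is not registered."""
    return registry.get(normalize_plate(raw_text))


# A hypothetical registry mapping plates to vehicle records.
registry = {
    "AB12CDE": {"vehicle": "blue hatchback", "owner": "J. Doe"},
}

print(find_owner(" ab12 cde ", registry))
print(find_owner("ZZ99 ZZZ", registry))  # None
```

Cleaning up the text first matters because a camera may read the same plate with or without spaces, or in a different letter case.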

What is speech recognition and how does it work?

Speech recognition is simply the ability of a computer to identify sounds and words in human speech. Once the computer has recognized what was said, it can:

- Identify sentiment and emotion: Computers are able to recognize emotion behind speech and text through analysis.

- Respond: Believe it or not, computers can now respond and converse with humans.

- Transcribe: Computers can transcribe speech to text. The reverse, turning text into speech, is known as speech synthesis.

What type of AI is used in speech recognition?

Speech recognition works hand in hand with natural language processing (NLP). Speech recognition turns audio into words, and NLP then analyzes the relationship between those words, often using deep learning algorithms.

One family of algorithms widely used for speech recognition is recurrent neural networks (RNNs), which are specifically designed for processing sequential data such as speech. RNNs are trained on massive amounts of audio data to learn the patterns and structures of human speech, and they can then use this knowledge to transcribe spoken words.
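What makes an RNN suited to sequences is that it carries a "memory" from one step to the next. Here is a minimal sketch of that idea, assuming a basic RNN cell with a tanh activation. The weights are hand-picked rather than trained, and the three two-number inputs merely stand in for slices of audio, so the output means nothing on its own.

```python
import math


def rnn_step(x, h_prev, w_in, w_rec, bias):
    """Combine the current input with the previous hidden state."""
    h_new = []
    for i in range(len(h_prev)):
        total = bias[i]
        total += sum(w_in[i][j] * x[j] for j in range(len(x)))
        total += sum(w_rec[i][k] * h_prev[k] for k in range(len(h_prev)))
        h_new.append(math.tanh(total))
    return h_new


def run_rnn(sequence, w_in, w_rec, bias):
    """Process inputs in order, returning the hidden state after each one."""
    h = [0.0] * len(bias)
    states = []
    for x in sequence:
        h = rnn_step(x, h, w_in, w_rec, bias)
        states.append(h)
    return states


# Hand-picked, untrained weights for a cell with two hidden units.
w_in = [[0.5, -0.3], [0.2, 0.4]]
w_rec = [[0.1, 0.6], [-0.4, 0.2]]
bias = [0.0, 0.0]

# Three made-up input frames standing in for slices of audio.
frames = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

forward = run_rnn(frames, w_in, w_rec, bias)
backward = run_rnn(frames[::-1], w_in, w_rec, bias)
print(forward[-1])
print(backward[-1])
```

Feeding the same frames in reverse order gives a different final state, which shows that the network is sensitive to the order of its inputs, just as the meaning of speech depends on the order of its sounds.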

As with image processing, the accuracy of speech recognition software tends to improve over time as the models are trained on more data.

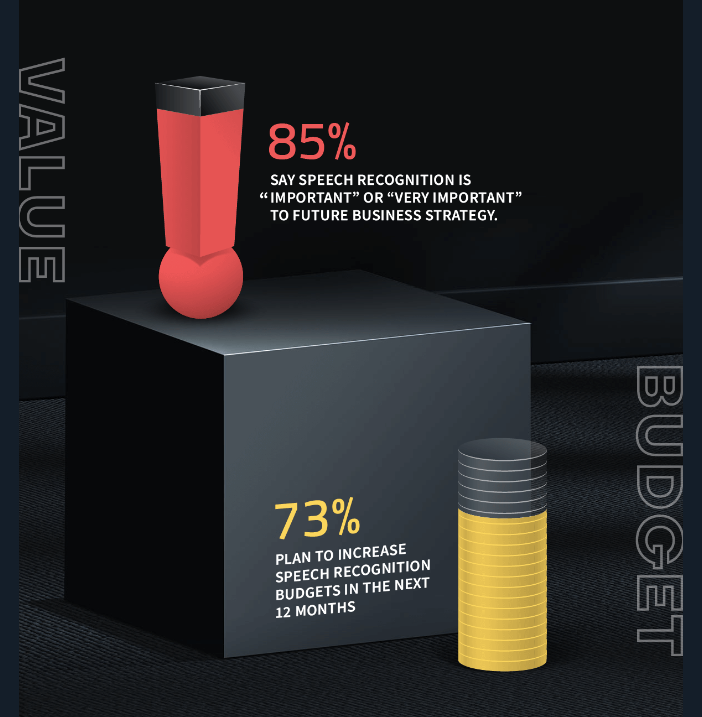

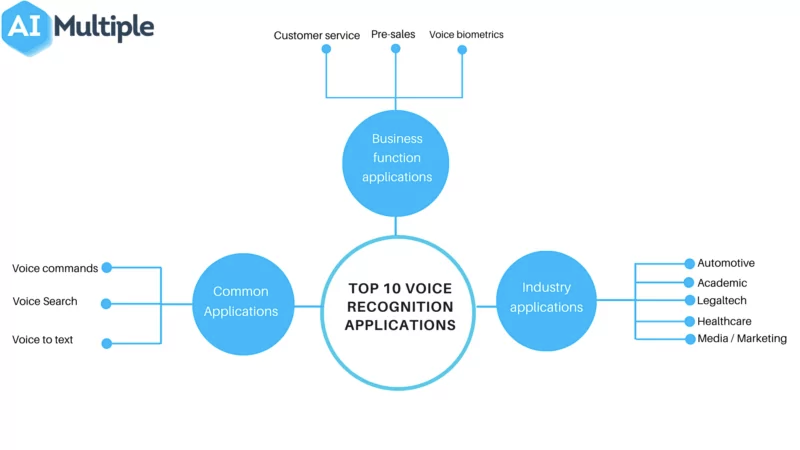

Real-world use cases

Speech recognition applications span a huge number of industries. As the technology continues to improve, its use cases are expanding beyond just personal use and entertainment.

In fact, this technology has found its way into a variety of real-world scenarios, revolutionizing industries from healthcare to finance. Let’s take a look at just a few.

Virtual assistants

Voice assistants like Siri depend on speech recognition technology. By listening to your voice and transcribing it into text, the computer is able to understand a request and respond with an answer.

These assistants can learn from their mistakes. For example, have you ever had to tell Alexa to stop playing a song because it wasn’t what you asked for? Feedback like this can be used to improve the AI, making its help more accurate over time.

Chatbots

Customer service has had a glow-up. The use of speech recognition and natural language processing in chatbots means that a large part of dealing with clients and customers can be automated. Recent developments have meant that it can now be difficult to tell whether you’re speaking to a human or a bot.

Social media listening

Want to find out what people are saying about your brand? It’s no longer a mystery. By using natural language processing, along with speech recognition for audio and video content, you can monitor the tone and sentiment behind conversations happening about your company.

This can be used to direct marketing campaigns and interact with your following in an appropriate way.
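As a rough illustration of how tone can be scored automatically, here is a minimal word-list approach. It is a deliberately simple sketch: the two word lists are made up for this example, and real social listening tools typically use trained language models rather than fixed lists.

```python
import re

# Tiny, made-up word lists; real tools use far richer models.
POSITIVE = {"love", "great", "excellent", "happy", "recommend"}
NEGATIVE = {"hate", "terrible", "awful", "slow", "broken"}


def sentiment_score(text):
    """Count positive words minus negative words in the text."""
    words = re.findall(r"[a-z']+", text.lower())
    positives = sum(word in POSITIVE for word in words)
    negatives = sum(word in NEGATIVE for word in words)
    return positives - negatives


def sentiment_label(text):
    """Turn the score into a simple label."""
    score = sentiment_score(text)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(sentiment_label("I love this brand, great support!"))        # positive
print(sentiment_label("The app is slow and the login is broken."))  # negative
print(sentiment_label("I ordered it on Monday."))                   # neutral
```

A scorer like this misses sarcasm and context entirely, which is one reason modern tools rely on deep learning instead.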

Conclusion

The advancements in image processing and speech recognition have revolutionized the way we interact with machines and the world around us. From facial recognition to virtual assistants and chatbots, these technologies are becoming an increasingly integrated part of our daily lives.

As AI systems continue to evolve and improve, they will open up new possibilities and opportunities in a wide range of industries.

However, it’s important to also consider the ethical implications of these technologies, such as privacy concerns and potential biases. As we continue to explore the potential of AI, we must also work towards responsible development and implementation that considers the impact on human intelligence and society as a whole.

Inge von Aulock

I'm the Founder & CEO of Top Apps, the #1 App directory available online. In my spare time, I write about Technology, Artificial Intelligence, and review apps and tools I've tried, right here on the Top Apps blog.
